For a few years now, it seems to me that more and more people in research have been using generative AI for quite a lot of stuff (bold take, I know).

At an ecology conference in 2024, I saw a lot of slideshows (including one of the plenary talks) illustrated with obviously AI-generated images of varying quality. I, for one, used some AI-generated images to illustrate a talk at a conference in 2023. At the time, and for a few months, I was really into making and looking at AI art¹ and was impressed by the possibilities it offered.

Recently, a colleague of mine showed me a map for a research project, and then told me she had prompted a Large Language Model (LLM) to write the R code that produced it. I was surprised, because the map was both very accurate and aesthetically quite good. By her own admission, she would not have been able to code it herself.

Last December, we had a special session in my lab’s Journal Club dedicated to AI. It drew in more people than any previous session², and I realised how widespread the use of LLMs was (especially among younger members of the lab). There was a (mostly) shared enthusiasm about how AI could help scientists with their work, as long as they knew how to use it properly.

About that last point, I have a little story to share.

A hallucinated reference rears its ugly head

For that Journal Club “AI” session, the colleagues who organised the discussion had put together a very nice little benchmark of AI for literature searches. They gave several LLMs (including ChatGPT, Copilot and Gemini) the same prompt, asking them to list papers relevant to their favourite research subject³. Beforehand, they had identified some papers that they expected the AIs to find.

Of course, not all LLMs recovered all the papers; results varied. Some articles were listed with the right title but the wrong first author. More disturbingly, one of the LLMs (Gemini) listed papers that do not exist: the journal’s website has no paper with that title, by those authors, in that issue.

Well. Hallucinations in LLMs are a common phenomenon. And that was just a fun experiment for a Journal Club, so no big deal. Anybody doing this for a paper that is to be published in a peer-reviewed journal would double-check the list of references given by the AI…

Right?

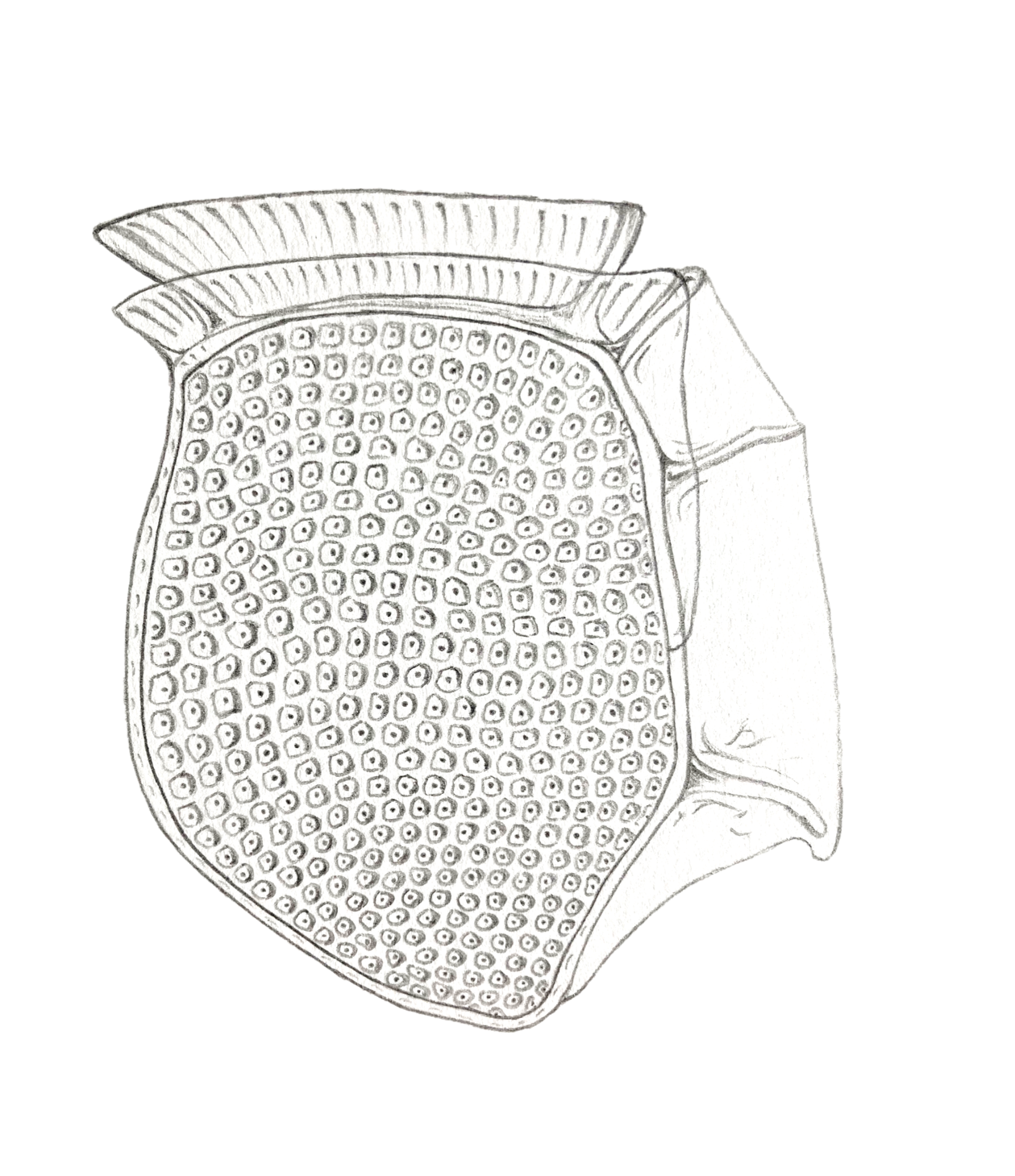

A few days ago, I came across a new(ish) paper about a little-studied dinoflagellate. How exciting! After a quick look, I went to the “references” section to see whether the authors had cited the work that some close colleagues published on this organism. Let’s say I was in for a surprise.

I know the authors whose family names are listed in reference [63] quite well, and I am familiar with their work on the subject. Yet I had never heard of that paper. A quick check showed that it couldn’t be found on Google Scholar and didn’t exist on the journal’s website. I emailed Roux, [P.] and Sourisseau, M., and they confirmed what I already knew: they had never written, let alone published, that paper.

I’m not the first one to witness this phenomenon. Three Japanese computer scientists published a preprint in late January 2026 showing that many (many!) papers in the proceedings of computer science conferences contained such hallucinated references (Sakai, Kamigaito and Watanabe, 2026). I had heard about it before I stumbled upon that ghostly reference [63]. Still, finding this in my own field of research felt (weirdly) exciting!

What’s the problem with hallucinated references?

Let’s say you make a bold claim in your paper about that little-studied dinoflagellate, based on your interpretation of your results. Readers may think “Ok, they’re maybe putting the cart before the horse here”, but that’s fine – after all, a bit of healthy speculation is allowed (even encouraged) in science.

Now let’s say you make that same bold claim and back it up with half a dozen hallucinated references that your LLM obediently wrote for you. Now the reader thinks “Wow, this seems wild but apparently it’s been validated by a lot of previous work! Let’s design a whole six-month experiment based on that claim!”

For me, the biggest problem with hallucinated references is that they distort the literature on a given subject, and therefore give a false impression of certainty. This is made even worse by the “quality” of the hallucinated reference: in the above example, the authors (except for Roux, [P.]’s initial), the title, the year and the journal were all perfectly consistent with work that could plausibly have been published on the subject.

You can also imagine how embarrassing it can be for the “authors” of a hallucinated reference. What if, at my next job interview, my potential future boss asks me about a paper I didn’t write?

Who’s to blame?

Well, first and foremost, the authors of the paper with the hallucinated reference. They did not check their LLM’s output, and didn’t read the paper they cited (obviously). I chose not to identify the paper in question to avoid embarrassing them (although if you’re motivated, you will find it quite easily). More on the authors’ accountability in the following section “What to do now?”

However, I really don’t think the authors should take the blame alone here. The paper was published in an MDPI journal that is supposed to be peer-reviewed (emphasis important). So the hallucinated reference slipped past an editor and a couple of reviewers who did not detect it. I don’t think they bear much responsibility: their role is not to scrutinise every reference and, as I wrote before, the reference seemed perfectly legit at first sight.

The ones who should (emphasis important) feel some shame on this matter, in my opinion, are the ones who should be accountable for what is published in their crappy journals, i.e., MDPI, the publisher. That they let this kind of stuff through is all the more damning given that hallucinated references are not so hard to detect once you know what you’re looking for (see the following section, “What to do now?”). I guess the process could even be automated quite easily, although I confess I’m no expert on that matter.

Maybe I’m being unfair to MDPI and, because the issue emerged only recently⁴ (the preprint about hallucinated references in computer science was posted on arXiv on January 26), they will quickly take action to prevent this. It’s the least they could do for their readers.

What to do now?

A week ago, I sent a polite email to the corresponding authors of the paper in question, laying out my concerns. I told them that I didn’t question their scientific integrity regarding the work presented in the paper (which I honestly consider interesting and relevant to our field), but that the hallucinated references were a serious problem. They replied quickly, saying that they agreed with me and that they would contact the journal’s editor and take steps to make it right (presumably by issuing a correction). As of today (2026/02/25), I’ve not heard back from them and the online version of the paper has not been corrected. I will update this post if (when?) there’s something new.

I think the main lesson here is: always check your LLM’s output. I don’t think the use of LLMs in research is going to stop anytime soon, and I don’t even think it’s inherently bad to ask an LLM to find relevant papers for you. But you have to double-check and, wild idea, read the papers yourself. Otherwise, you’re setting yourself up for this sort of mishap.

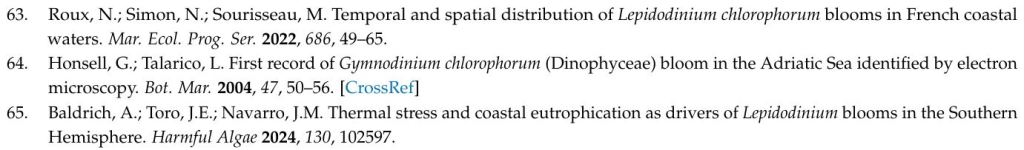

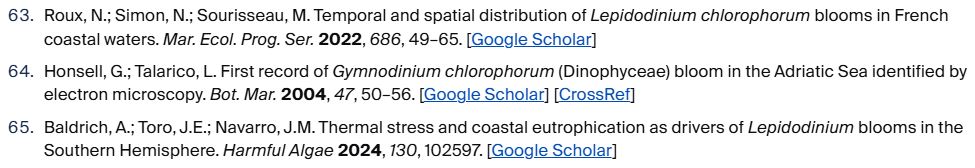

Also, as readers, we should now be aware that hallucinated references exist. This means we have to be more vigilant when reading a paper, especially when in-text references use the “numbered” form (e.g. [1])⁵. In the image of the paper’s reference list, you can see that the hallucinated ref [63] lacks the [CrossRef] hyperlink.

Ref [64], which exists, has the CrossRef link. Ref [65] doesn’t… and is also, to the best of my knowledge, a hallucinated reference. So a missing CrossRef link is a clue that you may be facing a hallucinated reference (in MDPI’s reference format, at least). It’s not absolute proof, though, as very old references (e.g. taxonomy monographs) also lack the CrossRef link. If you click on the Google Scholar link of a hallucinated reference, the search engine will tell you it found nothing⁶.
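Since the check could in principle be automated, here is a minimal sketch of what a first pass might look like in Python. It assumes Crossref’s public REST API (the `https://api.crossref.org/works` endpoint and its `query.bibliographic` parameter) as the point of comparison; the function names, the placeholder contact address and the 0.9 similarity threshold are hypothetical choices made for illustration, not a validated method. As with the missing CrossRef link, a low score is only a hint: old monographs without a DOI, or a real paper cited with a garbled title (like ref [64]), will also score low.

```python
import json
import urllib.parse
import urllib.request
from difflib import SequenceMatcher
from typing import List, Optional, Tuple

CROSSREF_API = "https://api.crossref.org/works"
# Placeholder contact address (hypothetical): Crossref recommends including one.
USER_AGENT = "ref-checker-sketch (mailto:you@example.org)"


def normalise(title: str) -> str:
    """Lowercase and replace punctuation with spaces so trivial differences don't count."""
    kept = "".join(c if c.isalnum() else " " for c in title.lower())
    return " ".join(kept.split())


def similarity(a: str, b: str) -> float:
    """Similarity ratio in [0, 1] between two normalised titles."""
    return SequenceMatcher(None, normalise(a), normalise(b)).ratio()


def best_match(cited_title: str, candidates: List[str]) -> Tuple[Optional[str], float]:
    """Return the candidate title closest to the cited one, with its score."""
    best, best_score = None, 0.0
    for cand in candidates:
        score = similarity(cited_title, cand)
        if score > best_score:
            best, best_score = cand, score
    return best, best_score


def crossref_titles(reference: str, rows: int = 5) -> List[str]:
    """Query Crossref with a free-text reference and return the titles of the top hits."""
    query = urllib.parse.urlencode({"query.bibliographic": reference, "rows": rows})
    request = urllib.request.Request(
        f"{CROSSREF_API}?{query}", headers={"User-Agent": USER_AGENT}
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        items = json.load(response)["message"]["items"]
    # Each item's "title" is a list of strings, which may be empty or missing.
    return [item["title"][0] for item in items if item.get("title")]


def looks_suspicious(cited_title: str, reference: str, threshold: float = 0.9) -> bool:
    """Flag a reference when no top Crossref hit has a title close to the cited one.

    The 0.9 threshold is an arbitrary assumption, not a calibrated value.
    """
    _, score = best_match(cited_title, crossref_titles(reference))
    return score < threshold
```

For instance, `looks_suspicious(title, full_reference_string)` would return `True` when none of the top Crossref hits has a title close to the cited one. Anything it flags still has to be checked by hand, and a reference it does not flag could still be wrong in other ways (authors, year, journal).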

Given the speed of AI development, it’s quite possible that in six months LLMs hallucinating references won’t be a problem anymore. But the core truth will remain: if you’re using AI (or any other tool) for your work, learn how it works, and make sure to use it right.

Finally (and I’m going off on a bit of a tangent here), a piece of personal advice to authors: don’t submit your work to MDPI journals. (Or Frontiers, they’re basically the same, or the new crap from Springer/Nature, who must have thought they were missing out on some easy money.) I know this may seem a bit categorical, because:

1) major fuckery also happens routinely at more traditional, “established”, “””respectable””” publishers (let’s just say Elsevier, Wiley and co. know who they are.)

2) they offer open access publication for a reasonable price (by industry standards), and it’s important to publish open access.

3) some good work was (still is?) published in Frontiers and MDPI, and their reputation hasn’t always been that bad. They had to at least try to appear serious at the start, to lure respectable authors in. But now, they’re basically predatory publishers with (slightly) better branding. (See this analysis of MDPI’s numbers by Paolo Crosetto. Also, this response to Frontiers’ response to an inquiry into their publishing model, which contains some real gems.)

Some of my colleagues and friends, whom I respect as scientists, have published in MDPI journals. I’d argue this is never the right choice. Want to publish open access? Go for PLoS: they’re not nearly as bad, probably because they’re a non-profit. Need to get something out quickly? Publish a preprint! Want to stick it to the traditional cartel of scientific publishing and be a hippie? Go for PCI!

Right now, publishing a paper in an MDPI journal is like telling me you hid a slice of chocolate cake in a field full of dog shit. If I know you really well, I might trust you enough to take a bite. But if I don’t, there’s no way I’ll take the chance.

This blog post is under a CC BY license.

I have no conflict of interest that could influence my opinions on any specific publisher. All opinions expressed are my own.

1. I used to do it with the website Nightcafé, which was quite fun back then, but I don’t know how it has evolved since.

2. Why people were so eager to discuss AI rather than a new paper about some weird feature of my favourite phytoplankton is beyond me…

3. For those interested in replicating the experiment, here’s the prompt they used, translated to English: “Find me articles about the impact of nutrient limitation on the production of prymnesins in Prymnesium parvum.” They knew this type of simple prompt is suboptimal, but going for the simplest approach seemed most relevant for the experiment.

4. Although AI crap (and human-made crap, for that matter) finding its way through the “rigorous” peer-review process of these exponentially growing for-profit publishers is nothing new. If you don’t already know the story of the Frontiers rat with the giant AI penis… I envy you, because you’re in for a funny read.

5. Actually, that’s now another pretty valid argument for using the more traditional form of references (“Dupont et al., 1999”).

6. Interestingly, the Google Scholar link for ref [64] here also leads to a “no results found” page. That’s because the title in the reference is erroneous (“microscopy” instead of “microscopical observation”). So it’s not an absolute criterion either (although in this case it’s yet another indication of poor reference management by the authors).
